Unmoderated User Testing

A time-efficient, cost-effective way to test your designs with end users.

The nuts and bolts: An unmoderated user test lets the participant complete tasks and answer questions at their own pace, at a time and location of their choosing. You can ask a user to think aloud, but there is no one to remind her if she forgets.

Let us paint you a picture. The month is July. Your project is on a super-tight holiday deadline: you have five weeks to get the product shipped and out to consumers. So you rush headlong to finish the project, forgoing the testing phase.

Then the holidays hit. What comes next is one of the largest failures known to man. Your company ends up burying truckloads of returned and unsold products in a landfill in Alamogordo, New Mexico. Does this story sound familiar? It should, because it really happened.

This is what happened with the infamous “E.T. the Extra-Terrestrial” video game for the Atari 2600. If you guessed correctly, give yourself 10 points.

The point of the story: if you do not test for your audience — or test at all — you are doomed to failure.

Without an extra set of eyes to look at your product, you are just designing for yourself. Your bias will hinder you from seeing all the problems that could have been easily fixed. Those problems can be the difference between commercial success and catastrophic failure.

User testing helps make sure that end users who visit your site can navigate it and use all of its functions properly.

The three primary types of user testing:

- In-Person

- Remote

- Asynchronous

Each of these methods has its pros and cons. But we’re thinking ahead of the curve and setting our sights on more unmoderated user testing. Here’s why.

USER TESTING METHODS

First, let’s pit these able warriors of user testing against one another and see just how they stack up.

In-Person (Moderated) User Testing

This is far and away the most widely known and accepted method of user testing. A proctor sits in on the testing session and asks questions while the participant carries out a task. This allows immediate back-and-forth feedback and a rich exchange of qualitative information. With this type of test, you can quickly identify usability flaws and fix them as you see fit before the next round of participants.

Amid the positive aspects of moderated testing, a few obstacles lurk.

- Expense. This is a serious Achilles’ heel, in both time and money. Travel expenses, lab rentals, test equipment and time away from your business are just a few of the costs. In a small startup, these resources are essential to everyday operations and cannot be spent freely.

- Location. More often than not, these tests are carried out in “laboratory settings” or other surroundings that participants may find “unnatural.” Taking away a participant’s sense of comfort can hurt test results: participants feel pressured to do things a certain way and may make more errors than they would in a more comfortable setting.

- Bias. Though this can be limited with a properly run moderated test, moderators are still human, and mistakes, miscues and unconscious body-language cues happen. An unintended consequence: the moderator can pass leading information and nonverbal cues to the participant, which slants the results.

Though most of these drawbacks apply to formal moderated testing, methods have been developed to lessen some of them. Guerrilla testing is one such in-person method, and it is pure, unadulterated do-it-yourself ethos in action. The designer simply asks people, more often than not coworkers, to take a quick test. That removes the need to set up a testing facility, saving a bit of expense.

Another advance in user testing tackled the lack of “comfortable” lab settings. This hindrance was overcome by moving moderated testing to a remote setup.

Remote User Testing

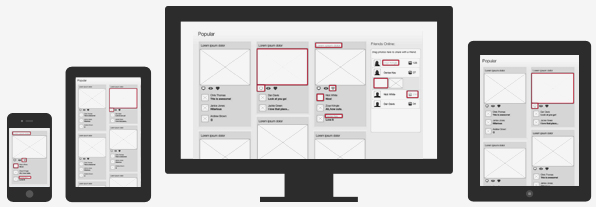

Remote moderated testing lets participants take your test at their own computer in their own home, while you proctor the session through software that allows you and the participant to view one another.

This eliminates any need for rental labs or travel expenses. However, you still need the proper software, and you have to set aside specific time slots. We don’t always have spare time for this, especially as deadlines loom.

We have to start planning for more cost-efficient, time-effective testing. We need to take all the cons of guerrilla and moderated testing, and turn them into opportunities. At the same time, we need to take the pros of each, mashing them into a single type of user test.

Asynchronous (Unmoderated) User Testing

This term/idea/concept is so new to the world of user testing that the word “unmoderated” still gets that vicious red squiggly underline in most spell checkers.

With unmoderated user testing, willing participants check out your web design from a remote location, and you gather quantitative data by tracking how they handle assigned tasks.

The remote locations that participants choose to test at can be as comfortable as their living rooms.

Letting participants take the test in real-life locations relieves the tension they might feel in an artificial, moderated testing environment. Beyond natural surroundings, participants also get real-world context because they can use phones and tablets during these tests.

In other words, they can test anywhere and on any device.

Unmoderated user testing is not proctored. Instead, software tracks and records every move, capturing the notes and observations an in-person moderator would typically make. Participants don’t have to be in the same room as a moderator, either. They can take the test at any time of day, whether they are on the far side of the world or just across the street.

And for the financially minded, this is a real boon: overhead is relatively low, since you don’t have to set up a lab or conduct the test yourself. That means your return on investment looks much better in the bigger picture of your business.

For comparison, one estimate put the total cost of a moderated usability test, even a low-end one, at about $10,500 in 1994, or approximately $16,400 in 2012 dollars.

That’s one hulking chunk o’ chicken feed. With an unmoderated user test, you eliminate the need to rent or reserve a lab. You save time because your presence isn’t required, so you can get other tasks done in your day. You also skip travel: some moderated user tests require flying to participants, which costs a lot of time and money on its own and brings the risk of damaged or lost equipment.

To make a long story short, unmoderated testing not only saves money and time but also gives you more results and plenty of actionable data.

An All-In-One Testing Tool

At Helio, we like to stay ahead of the curve, which is why we’ve built Helio so that everyone can take advantage of unmoderated user testing (sorry for the shameless plug). The app offers a “responsive testing experience”: you can test on multiple devices, whether that’s a phone, tablet, laptop or desktop.

Though each of these types of tests has its own strengths and weaknesses, there is one product we need from all of them: RESULTS. With these results, we can begin to figure out how to proceed with our designs, and one way to decide how to proceed is with confidence intervals.

Confidence Intervals

Once all the data has been recorded and charted, we use confidence intervals, because it’s impossible to sample the entire world of possible users. A confidence interval is a range, calculated from your sample’s results, that is likely to contain the true value for your whole user population (for example, the real task completion rate out in the wild).

Thanks to these intervals, your data is actionable whether your sample size is 20,000 or 20. A small sample simply produces a wider interval, so you know how much certainty you actually have. That tells us whether we are confident enough to go forward with a given design decision for better usability.
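To make this concrete, here is a minimal Python sketch of one common interval for task completion rates, the adjusted Wald (Agresti-Coull) interval, which is often recommended for the small samples typical of usability tests. The function name and the example counts are our own assumptions for illustration, not output from any particular tool. It shows how the same 80% completion rate yields a very wide interval with 5 participants and a very narrow one with 20,000.

```python
import math


def adjusted_wald_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Adjusted Wald (Agresti-Coull) interval for a task completion rate.

    Adds z^2/2 successes and z^2/2 failures, then applies the usual
    normal-approximation formula. z = 1.96 gives roughly 95% confidence.
    """
    if n <= 0 or not 0 <= successes <= n:
        raise ValueError("need n > 0 and 0 <= successes <= n")
    n_adj = n + z ** 2
    p_adj = (successes + z ** 2 / 2) / n_adj
    margin = z * math.sqrt(p_adj * (1 - p_adj) / n_adj)
    # A completion rate can't fall outside [0, 1], so clamp the bounds.
    return max(0.0, p_adj - margin), min(1.0, p_adj + margin)


# The same observed completion rate (80%) at very different sample sizes.
for successes, n in [(4, 5), (16, 20), (16000, 20000)]:
    low, high = adjusted_wald_interval(successes, n)
    print(f"{successes}/{n} completed: {low:.0%} to {high:.0%}")
```

With 4 of 5 completions the interval spans roughly 36% to 98%, while 16,000 of 20,000 narrows it to about 79% to 81%. Both results are usable; the small sample just tells you less precisely where the true rate lies.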

Unmoderated User Testing and You

Step By Step: Conduct Your Own Unmoderated User Test

To begin your unmoderated user testing, you should first develop succinct, easy-to-follow questions and tasks that you would like your participants to answer and complete.

The need for clearly assigned tasks is paramount. If your participants don’t understand a question or task, they cannot ask for help or an explanation. Keeping your participants free of uncertainty is vital.
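One way to keep yourself honest here is to write the plan down in a structured form and check it before launch. The Python sketch below is purely illustrative: the `Task` and `StudyPlan` classes, the 25-word limit and the checks in `clarity_warnings` are hypothetical choices of ours, not the format of Helio or any other tool. It flags tasks that are long-winded, bundle several steps, or lack a clear success criterion, since an unmoderated participant can’t ask you what you meant.

```python
from dataclasses import dataclass, field


@dataclass
class Task:
    """One task for participants (hypothetical structure, not a real tool's schema)."""
    instruction: str
    success_criterion: str  # how you will judge that the task was completed


@dataclass
class StudyPlan:
    tasks: list[Task] = field(default_factory=list)
    questions: list[str] = field(default_factory=list)  # follow-up questions


def clarity_warnings(plan: StudyPlan, max_words: int = 25) -> list[str]:
    """Flag tasks likely to confuse participants who can't ask for help."""
    warnings = []
    for number, task in enumerate(plan.tasks, start=1):
        word_count = len(task.instruction.split())
        if word_count > max_words:
            warnings.append(f"Task {number}: {word_count} words, consider shortening")
        if not task.success_criterion.strip():
            warnings.append(f"Task {number}: no success criterion")
        if " and then " in task.instruction.lower():
            warnings.append(f"Task {number}: several steps, consider splitting")
    return warnings


plan = StudyPlan(
    tasks=[
        Task("Find a pair of running shoes under $100 and add them to your cart.",
             "Cart contains running shoes priced under $100"),
        Task("Check out.", ""),
    ],
    questions=["On a scale of 1 to 5, how easy was it to find the shoes?"],
)
for warning in clarity_warnings(plan):
    print(warning)
```

The heuristics are deliberately crude; the real value is in forcing yourself to write down, for every task, what “done” looks like.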

If we learned anything from Castlevania II, it’s that non-linear and cryptic instructions will definitely hinder your participants’ experience to the point of unconstrained frustration.

Let’s Talk it Out

Since you, the would-be proctor, are not present in an unmoderated test, it’s important to encourage your participants to think out loud.

By recording participants and having them verbalize their actions as they work through their tasks and questions, you can begin to understand user behavior from an unbiased perspective. These comments help you sculpt a better, more usable design, drawing on more than just the quantitative test feedback.

Though not every unmoderated testing tool supports video or audio recording of participants (yet), you can still use text inputs throughout the test to gather those qualitative results.

Getting Your “Students”

That’s right, we have to have participants in order to carry this testing thing out. There are several sites or resources you can check out to enroll such participants, but don’t trouble yourself with scouring the vast web for the best prices or best candidates.

We have our own network of user testers called Enroll. The network is chock-full of unbiased and demographically diverse participants to use at your whim. No need to search high and low for willing candidates. As you decide how many participants to include in your test and how they fit into your budget, keep Jakob Nielsen’s five-user theory in mind.

The Five User Theory

Nielsen explains that in a usability test, as soon as your first participant completes their test, their feedback already reveals approximately a third of everything you need to know about the usability of your design.

With each additional participant, the results contain less and less new information about your design, because more and more of what you see overlaps with problems already noted. By the fifth participant, you should have documented about 85% of your usability problems. To uncover close to 100% of them, Nielsen says you will likely need at least 15 participants. However, that many testers for one test is a waste of your precious resources (aka time and money).
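Nielsen’s numbers follow from a simple model: if each participant uncovers any given problem with probability L, then n participants find a share `found(n) = 1 - (1 - L)^n` of the problems. The Python sketch below assumes L = 0.31, the average Nielsen reported across the projects he studied; your own project’s rate may be higher or lower, and the function name is ours.

```python
def share_of_problems_found(n_users: int, discovery_rate: float = 0.31) -> float:
    """Expected share of usability problems found by n_users participants.

    Model: found(n) = 1 - (1 - L) ** n, where L (discovery_rate) is the
    probability that a single participant uncovers a given problem.
    """
    return 1 - (1 - discovery_rate) ** n_users


for n in (1, 5, 15):
    print(f"{n:>2} users: {share_of_problems_found(n):.0%} of problems")
```

With L = 0.31, one user finds 31% of the problems, five find about 84% (close to the 85% Nielsen cites), and fifteen find over 99%. The curve flattens quickly, which is why small, repeated rounds beat one big test.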

The idea with the testing is to document opportunities for success with better design rather than just harping on each and every weakness. After your five participants finish their tests, that’s when you should revamp your design and then test again. More often than not, you’ll catch the 15% that still needs addressing in the next round of tests.

Rinse and repeat: redesign and retest as needed.

Nielsen’s five-user theory makes a strong case for those who work on a budget but still want appropriate feedback on their site iterations. You can even use the theory to save money, because you won’t waste dollars on unnecessarily large usability tests.

Time for the “Final Examination”

Now that you have squared away some participants, it’s time to execute the test itself.

There are two ways to connect with your participants: they can either visit a site that carries out the test or download software that implements it.

Once you decide which avenue you’d prefer, it’s a simple matter of uploading what you want the participants to test and then inputting the tasks and questions. Then simply direct your participants to the link or software for them to use. From this point, you can kick back and enjoy a nice cold beverage of your choice while the software records the tests and you begin to see the results and feedback trickle in.
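If your tool lets you hand out a separate link to each participant, tagging each link with an ID makes it easier to match recordings and responses to people later. The Python sketch below assumes a made-up test URL (`https://example.com/tests/checkout-study`) and a hypothetical `pid` query parameter; check which URL parameters, if any, your own testing tool actually accepts.

```python
import uuid
from urllib.parse import urlencode

# Hypothetical test URL: replace it with the link your testing tool gives you.
BASE_URL = "https://example.com/tests/checkout-study"


def participant_links(count: int, base_url: str = BASE_URL) -> dict[str, str]:
    """Map a random participant ID to a personal test link.

    The "pid" query parameter is an assumption; use whatever parameter
    your testing tool supports for tagging participants.
    """
    links = {}
    for _ in range(count):
        pid = uuid.uuid4().hex  # 32 random hex characters, effectively unique
        links[pid] = f"{base_url}?{urlencode({'pid': pid})}"
    return links


for pid, link in participant_links(3).items():
    print(link)
```

Random IDs keep participants’ names out of the URL, so a forwarded or logged link doesn’t reveal who took the test.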

Let the Computers Work their Magic

During your relaxation period, the software records the participants’ workflow. You can see where and how they clicked through the design to complete their assigned tasks and questions. This shows how easily first-time users can navigate your design and whether its usability is as good as you originally intended.
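As a rough illustration of what you can do with a recorded click path, the Python sketch below compares a participant’s path with the shortest path you expected and lists the pages they came back to. The page names and the `path_efficiency` and `revisited_pages` helpers are hypothetical and simplified; real tools record richer event data.

```python
def path_efficiency(actual: list[str], optimal: list[str]) -> float:
    """Optimal step count divided by the steps actually taken.

    1.0 means the participant took a shortest path; lower values mean detours.
    Only meaningful for completed attempts: an abandoned path can be shorter
    than the optimal one.
    """
    if not actual:
        raise ValueError("actual path is empty")
    return len(optimal) / len(actual)


def revisited_pages(actual: list[str]) -> set[str]:
    """Pages a participant visited more than once, a common sign of backtracking."""
    seen: set[str] = set()
    repeats: set[str] = set()
    for page in actual:
        if page in seen:
            repeats.add(page)
        else:
            seen.add(page)
    return repeats


# Hypothetical page names for a "buy running shoes" task.
optimal = ["home", "search", "product", "cart"]
actual = ["home", "about", "home", "search", "product", "cart"]
print(f"efficiency: {path_efficiency(actual, optimal):.2f}")
print(f"revisited: {sorted(revisited_pages(actual))}")
```

A detour through “about” and back to “home” is exactly the kind of hesitation a moderator would have noticed in person; here it shows up in the data instead.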

Additionally, the software will record a meaningful batch of metrics. These quantitative metrics include:

- Task completion rates;

- Time on task;

- Time on pages;

- And web analytics data (each participant’s browser, operating system and screen resolution)

Basically, each of these metrics is a more in-depth analysis of the workflow. By looking at the time spent on tasks, whether or not they were completed correctly, you can judge how effective your design is for participants.
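Here is a minimal Python sketch of turning recorded sessions into the first two metrics on the list. The `Session` record format and the sample numbers are hypothetical. It reports time on task both for all attempts (completed or not) and for successful attempts only, and it uses the median because a few very slow participants can drag an average far from the typical experience.

```python
import math
from dataclasses import dataclass
from statistics import median


@dataclass
class Session:
    """One participant's attempt at one task (hypothetical record format)."""
    participant: str
    task: str
    completed: bool
    seconds: float


def summarize(sessions: list[Session], task: str) -> dict[str, float]:
    """Completion rate and median time on task for a single task."""
    attempts = [s for s in sessions if s.task == task]
    if not attempts:
        raise ValueError(f"no sessions recorded for task {task!r}")
    successes = [s for s in attempts if s.completed]
    return {
        "completion_rate": len(successes) / len(attempts),
        # Time on task for every attempt, completed or not.
        "median_seconds_all": median(s.seconds for s in attempts),
        # Time on task for successful attempts only (NaN if nobody succeeded).
        "median_seconds_completed": (
            median(s.seconds for s in successes) if successes else math.nan
        ),
    }


sessions = [
    Session("p1", "checkout", True, 42.0),
    Session("p2", "checkout", True, 55.0),
    Session("p3", "checkout", False, 120.0),
    Session("p4", "checkout", True, 38.0),
    Session("p5", "checkout", True, 61.0),
]
print(summarize(sessions, "checkout"))
```

Comparing the two medians is informative on its own: when failed attempts take much longer than successful ones, participants are likely wandering before they give up.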

Because of its flexibility for testers, this model is quickly gaining a following among designers who stay up to date with the most advanced, progressive ways of maintaining their prominence on the Web.

Here’s to Championing a New Era of User Testing

Unmoderated user testing is paving the way for less expensive, less time-consuming testing, all while providing data and results just as reliable as those of more conventional methods.